By Austin ChipFrontier Team

The “Greatest Energy Security Challenge in History” is no longer a hypothetical. With the Strait of Hormuz effectively blocked, we are seeing:

The Impact on the US Economy

While the United States remains a leading producer of domestic energy, it is not immune to a globalized commodity market. The economic “insulation” is thinner than many expected:

The Path Forward

The duration of this conflict will dictate whether these shifts are temporary or structural. Iranian leadership has already suggested a “new regime” for the Strait of Hormuz, implying that shipping security may not return to pre-war norms anytime soon.

How is your industry adapting to these surging energy costs? Are we looking at a permanent shift in global supply chains?

#EnergySecurity #GlobalEconomy #OilAndGas #Macroeconomics #Inflation #EnergyTransition

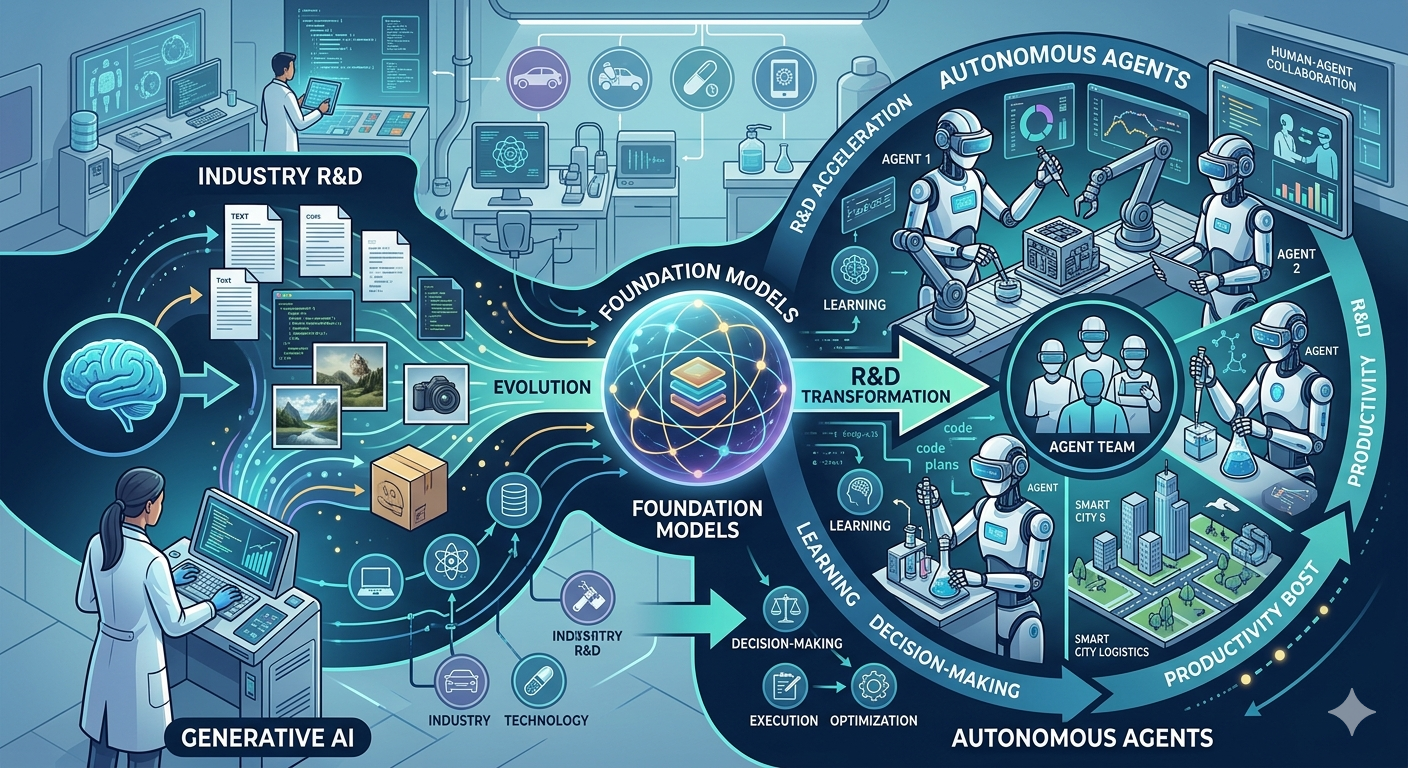

From Generative AI to Autonomous Agents: Industry-Wide R&D Transformation with Foundation Models

Over the last 18 months, generative AI has moved from an experimental novelty to a strategic pillar across nearly every sector. But what’s next? We’re now entering a new phase, in which foundation models not only generate insights but autonomously act on them. This shift from passive model outputs to autonomous agents is poised to redefine R&D itself, blurring the lines between ideation, simulation, and execution.

A Shift from Generative to Autonomous

Foundation models like GPT-4 (reportedly 175B+ parameters; the exact count is undisclosed) and Gemini 1.5 are already transforming content, code, and conversation. However, their integration into multi-agent systems introduces memory, planning, and tool usage, enabling closed-loop systems that can iteratively improve designs, synthesize experiments, and even file patents.

Autonomous agents are already being tested in domains like chip design, materials discovery, and synthetic biology. Their advantage? Extreme iteration speed and the ability to explore non-intuitive design spaces. In semiconductor R&D, for example, autonomous AI models are helping reduce the design-test-feedback loop from weeks to hours.

Why It Matters for R&D

Current data suggests that up to 40% of R&D spend in high-tech industries is tied to inefficiencies in simulation, prototyping, and testing. Autonomous agents built on foundation models are tackling this head-on.

Consider Intel’s use of generative AI for architecture exploration at advanced process nodes like 3nm and below. These models optimize power, performance, and area (PPA) trade-offs, compressing decision cycles that once took months. In pharma, generative models paired with autonomous agents are accelerating compound identification and preclinical testing, sometimes by an order of magnitude.

Key Implications

- Increased Throughput: Autonomous agents can run thousands of simulations in parallel, dramatically increasing iteration speed across industries.
- Cost Reduction: AI-driven R&D workflows reduce reliance on expensive physical prototyping and lab testing.
- Democratization of Expertise: Foundation models embedded with domain-specific knowledge lower the barrier for non-experts to contribute to high-stakes innovation.
- IP Acceleration: Some labs report autonomous systems generating novel patentable ideas, potentially shifting the pace and ownership of intellectual property.
- Talent Strategy Reboot: R&D organizations must now prioritize prompt engineering, model fine-tuning, and agent orchestration as core competencies.

Economic Impact

According to recent industry benchmarks, organizations piloting autonomous R&D agents in sectors like semiconductors, aerospace, and chemicals are realizing up to 30% faster time-to-market. In multi-billion-dollar markets, that translates to billions in potential value capture, and to a new competitive frontier defined not just by human talent, but by how well your AI agents perform.

In many ways, we’re witnessing the industrialization of cognition. Foundation models are no longer just tools; they’re becoming co-workers. The next S-curve in productivity may not come from automation alone, but from AI that can think, reason, and act across the full innovation lifecycle.

What’s Next?

As we move from reactive models to proactive agents, the real question is no longer “What can AI do?” but “What should we allow AI to decide?”

How will industries govern this new class of autonomous collaborators?

#FoundationModels #AutonomousAgents #R&DInnovation #GenerativeAI #Semiconductors #FutureofWork #AIinIndustry

Memory’s Next Shakeout: HBM, AI Demand, and the Return of Pricing Power

The semiconductor industry is in the early stages of a profound memory realignment, and this time it’s not about who can produce more bits, but who can deliver bandwidth, power efficiency, and AI scalability at unprecedented levels. As High Bandwidth Memory (HBM) becomes the linchpin of accelerated computing, pricing power is shifting back into the hands of memory manufacturers, a reversal not seen since the pre-2018 DRAM boom.

HBM: From Niche to Necessity

HBM is no longer a boutique offering for high-end GPUs. With AI models like GPT-4 and its successors scaling toward trillions of parameters and training compute approaching 10^25 FLOPs, the need for memory that can keep pace has never been more acute. Traditional DDR and even GDDR memory architectures simply can’t match the bandwidth-to-power ratio required for training and inference at this scale.

HBM3 delivers roughly 0.8 TB/s per stack, and the upcoming HBM3E promises bandwidths exceeding 1.2 TB/s per stack, with power efficiency improvements approaching 15% over prior generations. These specs aren’t just nice-to-haves; they’re essential for hyperscalers deploying AI clusters at scale. As of Q1 2024, over 70% of new AI accelerator designs include native HBM integration, according to recent industry benchmarks.

Why Pricing Power is Back

For the past decade, memory pricing has followed a commoditized boom-and-bust cycle driven by oversupply, inventory corrections, and razor-thin margins. But HBM changes the game.

Unlike commodity DRAM, HBM production involves advanced TSV (Through-Silicon Via) stacking, tight thermal envelopes, and high-yield interposer integration. These are not easily ramped by second-tier fabs. Current data suggests that fewer than five players globally, including SK hynix, Samsung, and Micron, have the technical and capital capability to deliver HBM at scale and at the quality required by tier-one AI vendors.
This concentrated supply base, combined with insatiable demand from NVIDIA, AMD, and custom ASIC builders, is creating a virtuous cycle (for suppliers) of constrained supply and rising ASPs (Average Selling Prices).

Key Insights:

- HBM3E is the new gold standard for AI compute, with bandwidths exceeding 1.2 TB/s per stack and latency improvements critical for real-time inference.
- Vertical integration is rising as chipmakers like NVIDIA co-develop memory subsystems to optimize for performance-per-watt.
- Pricing power is shifting back to memory manufacturers as AI demand outpaces fabrication capacity for advanced memory nodes (1a-nm and beyond).
- Supply chain risk is increasing due to geopolitical factors and the complexity of advanced packaging required for HBM deployment.
- Economic ripple effects are likely to hit downstream AI startups and cloud providers, as rising BOM costs could constrain innovation velocity.

What This Means for the Industry

We’re entering a new memory-centric era of compute. In this era, power efficiency, memory bandwidth, and interconnect latency matter as much as, if not more than, raw transistor count. While TSMC and Intel continue to push 3nm and 2nm nodes, it’s clear that AI performance bottlenecks are shifting away from logic and into memory bandwidth and access patterns.

Economic implications? Consider this: If HBM pricing remains firm or accelerates, it could significantly alter the cost structure of AI cloud services. Already, some hyperscalers are evaluating alternative model architectures, such as mixture-of-experts, that reduce memory bandwidth pressure. Others are investing in advanced memory controllers or on-package cache solutions to reduce dependency.

According to recent forecasts, the HBM market is expected to grow at a CAGR of over 30% through 2028, outpacing all other DRAM segments. This isn’t just a new product cycle; it’s a structural shift in how value is captured along the AI hardware stack.
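
For readers who want to sanity-check two of the figures in this post, here is a minimal back-of-envelope sketch. It is not vendor or analyst data. It assumes a 1024-bit interface per stack, illustrative per-pin rates of 6.4 Gb/s (HBM3) and 9.6 Gb/s (HBM3E), and four years of compounding (2024 to 2028) at exactly 30%. The function names are hypothetical helpers made up for this example.

```python
# Back-of-envelope checks for two figures quoted in this post.
# Every input below is an illustrative assumption, not vendor or analyst data.


def stack_bandwidth_gb_s(pin_rate_gbit_s: float, interface_bits: int = 1024) -> float:
    """Peak bandwidth of one HBM stack in GB/s.

    pin_rate_gbit_s: per-pin data rate in Gb/s (assumed; varies by vendor)
    interface_bits:  interface width per stack (1024 bits assumed here)
    """
    return pin_rate_gbit_s * interface_bits / 8  # divide by 8: bits -> bytes


def growth_multiple(cagr: float, years: int) -> float:
    """Market-size multiple after compounding `cagr` for `years` years."""
    return (1.0 + cagr) ** years


# Assumed per-pin rates: 6.4 Gb/s for HBM3, 9.6 Gb/s for HBM3E.
for name, rate in [("HBM3", 6.4), ("HBM3E", 9.6)]:
    print(f"{name}: {stack_bandwidth_gb_s(rate) / 1000:.2f} TB/s per stack")

# Assumed horizon: four years of compounding at a flat 30% per year.
print(f"Market multiple at 30% CAGR over 4 years: {growth_multiple(0.30, 4):.2f}x")
```

Under those assumptions, the HBM3E case lands just above 1.2 TB/s per stack, and a 30% CAGR compounds to a little under 3x over four years. Changing the pin rate or the base year shifts both results, so treat them as order-of-magnitude checks only.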
The Road Ahead

As AI workloads scale and edge deployments become more common, will HBM remain the bottleneck, or will new memory architectures (like CXL-attached DRAM or even optical memory) challenge its dominance? The memory shakeout is only beginning, and strategic positioning today will define compute economics for the next decade.

How should semiconductor leaders reassess their memory roadmaps in a world where bandwidth is the new transistor?

#HBM #Semiconductors #AIInfrastructure #MemoryTechnology #ChipDesign #ComputeEconomics #AIHardware