Over the past decade, the GPU has been the undisputed engine of the AI revolution. From training GPT-scale models in the data center to enabling real-time inference on mobile devices, GPUs have defined the pace and possibility of AI innovation, with general-purpose data-center parts like NVIDIA’s A100 and H100 setting the high end.

But a quiet shift is underway—one that’s poised to redefine the hardware foundation beneath the next wave of AI advancements.

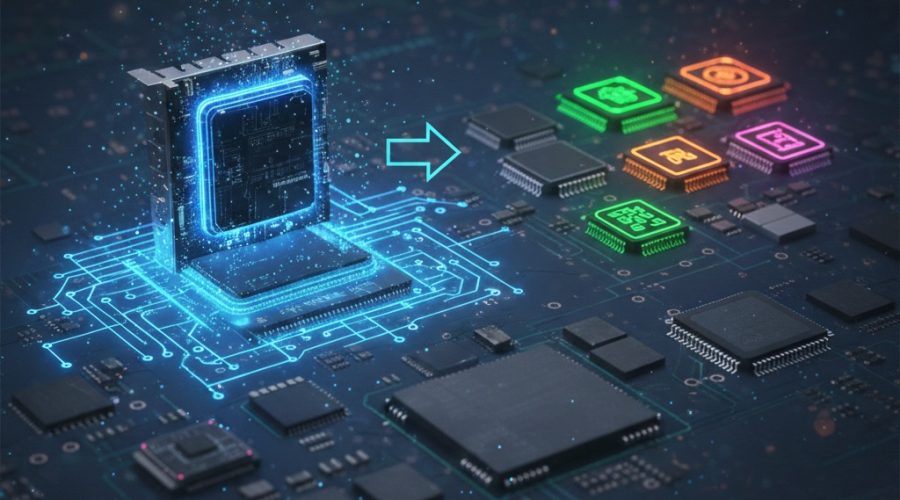

Enter domain-specific accelerators (DSAs), custom-built silicon optimized for targeted AI workloads. These are not just chips—they’re purpose-built economic levers, engineered to break the performance-cost tradeoffs that GPUs are now struggling to maintain.

The Bottleneck with GPUs

GPUs are incredibly powerful, but they are inherently generalized. Their architecture, while parallel and high-throughput, is not always optimal for the increasingly heterogeneous and latency-sensitive needs of modern AI systems.

Training LLMs like GPT-4 and Gemini requires immense compute: the total work is counted in FLOPs (floating-point operations), while hardware throughput is rated in FLOP/s (floating-point operations per second). Inference at scale, by contrast, demands low-latency, power-efficient silicon optimized for specific matrix operations and memory access patterns.
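A quick back-of-the-envelope sketch shows how a total operation count translates into wall-clock time at a given sustained rate. Every number here is a hypothetical placeholder, not a measurement of any real model or chip, and the `training_days` helper is invented for illustration.

```python
SECONDS_PER_DAY = 86_400


def training_days(total_flops: float, peak_flops_per_s: float,
                  utilization: float, num_chips: int) -> float:
    """Wall-clock days needed to execute `total_flops` operations.

    total_flops      -- total floating-point operations (a count, not a rate)
    peak_flops_per_s -- peak throughput of one chip, in operations per second
    utilization      -- fraction of peak actually sustained, in (0, 1]
    num_chips        -- number of identical chips working in parallel
    """
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    sustained = peak_flops_per_s * utilization * num_chips
    return total_flops / sustained / SECONDS_PER_DAY


# Hypothetical inputs: 1e24 operations, 1e15 FLOP/s peak per chip,
# 40% sustained utilization, 1,000 chips.
days = training_days(1e24, 1e15, 0.40, 1000)
print(f"{days:.1f} days")
```

The utilization term is the part that matters for the argument here: silicon that sits idle for a given workload is still paid for, which is why poorly matched hardware stretches both time and cost.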

GPUs built on sub-10nm nodes (e.g., TSMC’s 5nm and 4nm) face rising costs and diminishing returns, particularly when silicon area is not being fully utilized for a given AI task. This inefficiency is opening the door for more specialized solutions.

Rise of Domain-Specific Accelerators

Companies like Google (TPU v4), Amazon (Inferentia), and startups like Cerebras and Tenstorrent are building DSAs that challenge the GPU hegemony. These chips are designed with hardwired pipelines for specific tensor operations, optimized memory hierarchies, and reduced instruction set complexity.

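According to recent industry benchmarks, a well-optimized DSA can deliver up to 3x better performance-per-watt and up to 2x lower latency (roughly half the response time) for inference workloads compared to leading GPUs. These are best-case figures; actual gains depend on how closely the workload matches what the chip was designed for.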

Most notably, DSAs enable significant cost advantages in hyperscale data centers and edge environments. Current data suggests that deploying DSAs at scale could reduce the cost of running AI workloads by 20–40% when modeled as total cost of ownership (TCO) over three years.
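As a rough illustration of how such a TCO comparison is put together, here is a minimal sketch that adds hardware purchase cost to three years of electricity. The `Fleet` class, the prices, the power draws, the electricity rate, and the PUE (data-center power overhead) are all hypothetical placeholders chosen for illustration. The sketch also assumes both fleets deliver the same useful throughput, and it ignores staffing, networking, and software porting costs.

```python
from dataclasses import dataclass

HOURS_PER_YEAR = 8_760


@dataclass
class Fleet:
    """A group of identical accelerators (all values are hypothetical)."""
    name: str
    num_chips: int
    chip_price_usd: float
    watts_per_chip: float

    def tco(self, years: float, usd_per_kwh: float, pue: float = 1.0) -> float:
        """Purchase cost plus electricity over `years`, scaled by PUE."""
        capex = self.num_chips * self.chip_price_usd
        kilowatts = self.num_chips * self.watts_per_chip / 1000
        energy_kwh = kilowatts * HOURS_PER_YEAR * years * pue
        return capex + energy_kwh * usd_per_kwh


# Placeholder inputs, not vendor figures.
gpu = Fleet("gpu", num_chips=1000, chip_price_usd=30_000, watts_per_chip=700)
dsa = Fleet("dsa", num_chips=1000, chip_price_usd=20_000, watts_per_chip=400)

gpu_tco = gpu.tco(years=3, usd_per_kwh=0.10, pue=1.3)
dsa_tco = dsa.tco(years=3, usd_per_kwh=0.10, pue=1.3)
savings = 1 - dsa_tco / gpu_tco

print(f"GPU fleet: ${gpu_tco:,.0f}")
print(f"DSA fleet: ${dsa_tco:,.0f}")
print(f"Savings:   {savings:.0%}")
```

The result is only as good as its inputs. Swapping in real quotes, measured power draw, and the number of chips each option actually needs for the same workload is what turns this from a sketch into a model.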

Key Insights

- Silicon Economics Shift: As costs at 3nm and smaller nodes soar, DSAs offer better transistor utilization per dollar spent.

- Inference Optimization: Latency-critical applications (e.g., real-time translation, autonomous driving) benefit immensely from DSA architectures.

- Energy Efficiency: DSAs provide improved performance-per-watt, a key metric as sustainability becomes a boardroom priority.

- Vertical Integration: Tech giants are bringing hardware in-house to control the full AI stack—from model to silicon.

- Economic Moats: Custom hardware is becoming a strategic differentiator in the AI value chain.

The Strategic Implication

This isn’t about replacing GPUs—it’s about complementing them. The future will be heterogeneous: GPUs for flexible training, DSAs for hyper-efficient inference, and neuromorphic or photonic chips for frontier use cases.

For semiconductor investors, cloud providers, and AI startups, this signals a phase transition. Competitive advantage is shifting from raw compute to tailored compute. The winners in this next era will be those who co-design their models and silicon architectures.

What’s Next?

As the AI ecosystem evolves from billion-parameter models to trillion-parameter architectures, will your compute strategy scale?

Let’s discuss what this shift means for your business—whether you’re building models, hardware, or the infrastructure that connects them.

#AIHardware #Semiconductors #DomainSpecificAccelerators #MachineLearning #EdgeComputing #LLM #TechLeadership